By Regulation & Policy Team | AI Regulation, Data Privacy, International Law

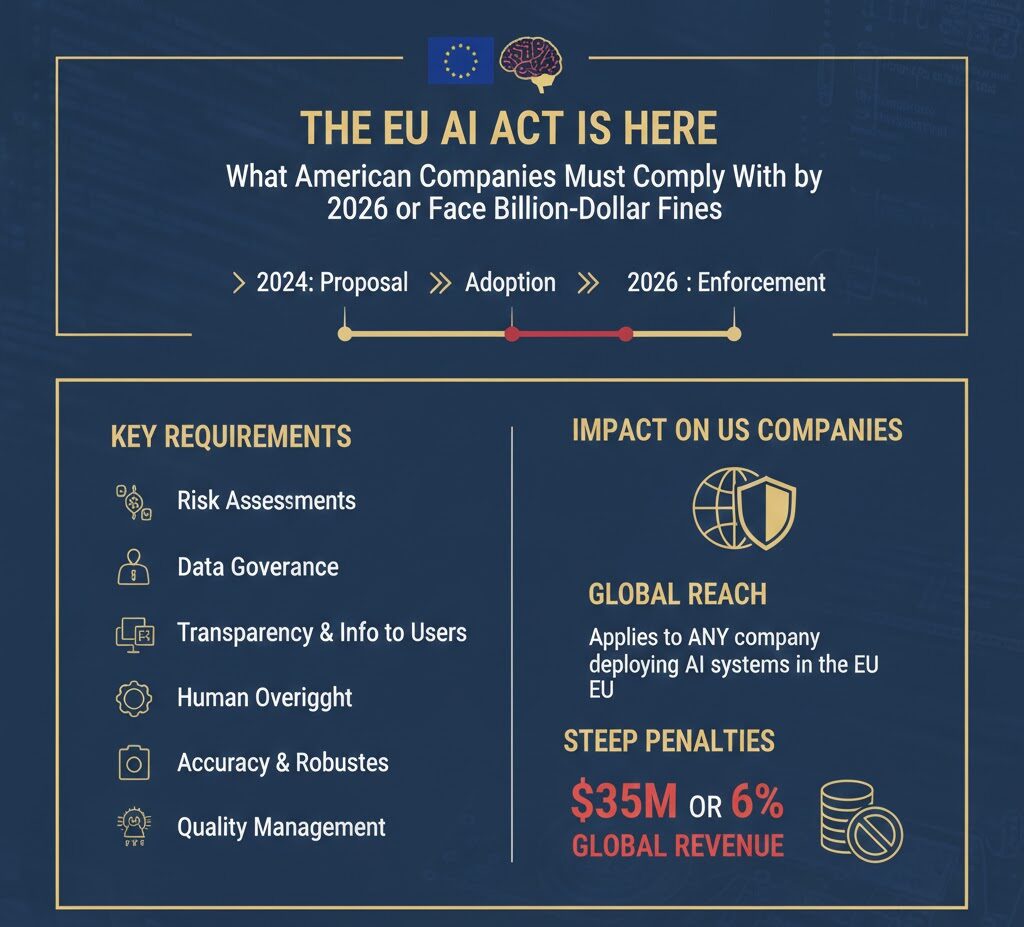

If you think this is just another European regulation you can ignore, you are catastrophically mistaken. The EU AI Act applies to any American company whose AI systems affect EU residents—whether you’re targeting European markets or simply have EU users stumbling across your platform. A SaaS company in Austin, a fintech startup in New York, an e-commerce platform in Seattle: all are now within Brussels’ regulatory crosshairs.

The countdown to August 2026—when high-risk AI obligations become fully enforceable—is ticking. This is your roadmap to compliance, or your warning of the financial apocalypse awaiting those who fail to prepare.

The Penalty Structure: Why This Makes GDPR Look Tame

Remember when GDPR fines seemed terrifying? The EU AI Act makes them look like parking tickets.

| Violation Category | Maximum Fine (whichever is higher) | What It Covers |
|---|---|---|
| Prohibited AI Practices | €35M or 7% of global turnover | Social scoring, manipulative AI, untargeted biometric scraping, emotion recognition in workplaces |
| High-Risk AI Non-Compliance | €15M or 3% of global turnover | HR/recruiting AI, credit scoring, medical devices, inadequate risk management or human oversight |
| Transparency Violations | €15M or 3% of global turnover | Failure to disclose AI interaction, missing deepfake labeling |
| Incorrect Information to Authorities | €7.5M or 1% of global turnover | Supplying incorrect, incomplete, or misleading information to regulators or notified bodies |
| GPAI Model Violations | €15M or 3% of global turnover | Systemic risk model violations, inadequate documentation, failure to report incidents |

Source: Articles 99 and 101, EU AI Act; European Commission guidance 2025

Let those numbers sink in. A mid-sized tech company with $500 million in global revenue could face roughly $35 million in fines for a prohibited AI practice, because the higher of the fixed cap and the turnover percentage applies. A Fortune 500 giant with $50 billion in revenue? That’s a potential $3.5 billion penalty, nearly triple the largest GDPR fine ever issued (€1.2 billion to Meta).
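The arithmetic behind these figures can be sketched in a few lines. This is a minimal illustration, assuming the "whichever is higher" rule that Article 99 sets for larger companies (for SMEs the lower of the two amounts applies) and treating every amount as euros for simplicity. The `TIERS` table and the `max_fine_eur` function are hypothetical names chosen for this example.

```python
# Illustrative only: fixed caps (in euros) and turnover percentages taken from
# the penalty table above. The tier keys and function name are hypothetical.
TIERS = {
    "prohibited_practices": (35_000_000, 7),
    "high_risk_non_compliance": (15_000_000, 3),
    "incorrect_information": (7_500_000, 1),
}


def max_fine_eur(tier: str, annual_turnover_eur: int) -> int:
    """Return the fine ceiling: the higher of the fixed cap and the turnover share."""
    fixed_cap, percent = TIERS[tier]
    turnover_share = annual_turnover_eur * percent // 100
    return max(fixed_cap, turnover_share)


if __name__ == "__main__":
    # The two company sizes discussed above, with revenue treated as euros.
    for turnover in (500_000_000, 50_000_000_000):
        print(turnover, max_fine_eur("prohibited_practices", turnover))
```

For smaller companies the fixed cap dominates: at €100 million in turnover, 3% is only €3 million, so the high-risk ceiling stays at €15 million.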

The EU AI Act doesn’t just match GDPR’s extraterritorial ambition. It exceeds it. And unlike GDPR’s 4% maximum penalty, the AI Act’s 7% ceiling creates an entirely new category of existential financial risk.

The Extraterritorial Trap: How U.S. Companies Get Snared

Article 2 of the EU AI Act contains the same jurisdictional weapon that made GDPR a global compliance nightmare. The Act applies to:

- Providers placing AI systems on the EU market, regardless of establishment location

- Deployers (users) of AI systems within the EU

- Providers and deployers in third countries (including the U.S.) where the AI system’s output is used in the Union

That final clause is the killer. It means a Texas-based SaaS company using AI to screen job applicants could face EU AI Act obligations if the system’s output is used in the EU, for example when it ranks candidates located there, even if the company isn’t actively recruiting in Europe. An American fintech platform using AI for credit decisions could be pulled in the same way once those decisions are used in the Union. The trigger is where the output is used, not the citizenship of the person affected.

As noted by Mayer Brown’s legal analysis, “A software company in Texas that sells an AI-driven resume-screening tool to a single French employer may now need to classify its AI system under the EU AI Act’s risk tiers, conduct conformity assessments, maintain technical documentation, conduct post-market monitoring, and implement human oversight consistent with EU-defined standards.”

The Brussels Effect is real. Just as GDPR forced global privacy compliance, the EU AI Act is establishing the de facto international standard for AI governance. American companies face a choice: comply with EU standards, or surrender access to a market of 450 million consumers with $17 trillion in GDP.

The Risk-Based Framework: What Gets Regulated How

The EU AI Act operates on a four-tier risk classification system. Understanding where your AI systems fall is the first step to compliance.

Tier 1: Unacceptable Risk (Prohibited)

These AI practices are banned outright as of February 2, 2025. If you’re doing any of the following, stop immediately:

- Subliminal manipulation: AI systems deploying techniques beyond consciousness to materially distort behavior

- Exploitation of vulnerabilities: AI targeting individuals based on age, disability, or social/economic situation to distort behavior

- Social scoring: Evaluating or classifying people based on social behavior or personal characteristics in ways that lead to detrimental or unjustified treatment (the ban covers public and private actors alike)

- Predictive policing: AI predicting criminal behavior based solely on profiling or personality traits

- Untargeted facial recognition scraping: Creating facial recognition databases by scraping images from internet or CCTV

- Emotion recognition in workplaces/schools: Inferring emotions in these contexts (with narrow medical/safety exceptions)

- Biometric categorization: Using biometric data to infer race, political opinions, religious beliefs, or sexual orientation

- Real-time remote biometric identification: In publicly accessible spaces for law enforcement (with limited exceptions)

Penalty for violation: Up to €35 million or 7% of global turnover, whichever is higher. These are outright bans backed by the Act’s steepest administrative fines, not mere compliance obligations.

Tier 2: High Risk (Strict Compliance Requirements)

High-risk AI systems face the Act’s most stringent obligations. These include:

- AI systems used as safety components in regulated products (medical devices, vehicles, machinery)

- AI in critical infrastructure (transport, water, gas, electricity)

- AI in education and vocational training (admissions, evaluations)

- AI in employment (recruiting, promotion, termination, performance evaluation)

- AI in essential services (credit scoring, insurance, benefits)

- AI in law enforcement (evidence evaluation, risk assessment)

- AI in migration and border control

- AI in administration of justice and democratic processes

Compliance deadline: August 2, 2026 (with some provisions delayed to August 2027)

Key obligations for high-risk AI:

| Requirement | What It Means |
|---|---|
| Risk Management System | Continuous iterative process running throughout AI system lifecycle; identification and analysis of known and foreseeable risks |
| Data Governance | Training, validation, and testing datasets must meet quality criteria; appropriate data governance and management practices |
| Technical Documentation | Extensive documentation proving compliance; must be drawn up before system is placed on market and kept up-to-date |
| Record-Keeping | Automatic logging of events while system is operating; minimum 6-month retention; must ensure traceability |
| Transparency | Clear instructions for use; disclosure that AI is being used; information about capabilities and limitations |
| Human Oversight | Natural persons must be able to oversee AI operation; ability to intervene, override, or reverse decisions |
| Accuracy, Robustness, Security | Appropriate levels of accuracy; resilience to errors, faults, inconsistencies; protection against unauthorized access |
| Conformity Assessment | Internal assessment or third-party audit before placing on market; CE marking required |
| Registration | Registration in EU database for high-risk AI systems before first use |
| Post-Market Monitoring | Systematic collection and review of experience from use; continuous monitoring for risks and incidents |

Penalty for non-compliance: Up to €15 million or 3% of global turnover
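The record-keeping row above sets a six-month minimum retention period for automatically generated logs. A minimal sketch of how a purge job might check that floor follows. The helper names (`add_months`, `earliest_purge_date`, `may_purge`) are hypothetical, and reading "six months" as six calendar months is an assumption; other laws or contracts may require longer retention.

```python
import calendar
from datetime import date

MIN_RETENTION_MONTHS = 6  # the minimum stated in the table above


def add_months(start: date, months: int) -> date:
    """Add calendar months, clamping to the last day of shorter months."""
    month_index = start.month - 1 + months
    year = start.year + month_index // 12
    month = month_index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(start.day, last_day))


def earliest_purge_date(logged_on: date) -> date:
    """First date on which a log entry has met the minimum retention period."""
    return add_months(logged_on, MIN_RETENTION_MONTHS)


def may_purge(logged_on: date, today: date) -> bool:
    """True only once the minimum retention period has fully elapsed."""
    return today >= earliest_purge_date(logged_on)


if __name__ == "__main__":
    entry_date = date(2026, 1, 15)
    print(earliest_purge_date(entry_date), may_purge(entry_date, date(2026, 7, 14)))
```

Treating six months as a floor rather than a target keeps the purge logic conservative: nothing is deleted early, and a longer retention policy only needs a larger constant.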

Tier 3: Limited Risk (Transparency Obligations)

These AI systems face lighter transparency requirements:

- Chatbots and AI systems interacting with humans

- AI-generated content (deepfakes)

- Emotion recognition or biometric categorization systems

Key obligation: Users must be informed they are interacting with AI, and AI-generated content must be labeled as such.

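Compliance deadline: August 2, 2026 for these transparency obligations (GPAI model obligations apply separately from August 2, 2025)

Penalty for violation: Up to €15 million or 3% of global turnover for breaching the transparency obligations; supplying incorrect information to regulators carries up to €7.5 million or 1%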

Tier 4: Minimal Risk (Voluntary Codes)

AI systems not falling into other categories (spam filters, AI-enabled video games, inventory management) face no mandatory requirements but are encouraged to follow voluntary codes of conduct.
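A first-pass triage of the four tiers can be sketched as a lookup that returns the strictest tier among a system's use cases. This is a simplified illustration, not a legal classification: the use-case labels and the `USE_CASE_TIERS` mapping are hypothetical and cover only a few of the examples listed above.

```python
from enum import IntEnum


class RiskTier(IntEnum):
    MINIMAL = 1
    LIMITED = 2
    HIGH = 3
    PROHIBITED = 4


# Hypothetical labels mapped to the tiers described in this section.
USE_CASE_TIERS = {
    "social_scoring": RiskTier.PROHIBITED,
    "untargeted_face_scraping": RiskTier.PROHIBITED,
    "workplace_emotion_recognition": RiskTier.PROHIBITED,
    "recruiting": RiskTier.HIGH,
    "credit_scoring": RiskTier.HIGH,
    "medical_device_component": RiskTier.HIGH,
    "chatbot": RiskTier.LIMITED,
    "deepfake_generation": RiskTier.LIMITED,
    "spam_filter": RiskTier.MINIMAL,
}


def classify(use_cases: set[str]) -> RiskTier:
    """Return the strictest tier among the given use cases."""
    unknown = sorted(u for u in use_cases if u not in USE_CASE_TIERS)
    if unknown:
        # Unknown use cases are escalated rather than silently treated as minimal.
        raise ValueError(f"Needs legal review, no tier mapped for: {unknown}")
    return max((USE_CASE_TIERS[u] for u in use_cases), default=RiskTier.MINIMAL)


if __name__ == "__main__":
    print(classify({"chatbot", "recruiting"}).name)
```

Raising on an unmapped use case is a deliberate choice in this sketch: defaulting unknown systems to minimal risk would hide exactly the cases that most need a lawyer's attention.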

The Implementation Timeline: Critical Dates for U.S. Companies

The EU AI Act’s staggered implementation creates a compliance gauntlet. Missing a deadline that applies to your systems exposes you to penalties.

February 2, 2025: Prohibited Practices Ban (ACTIVE NOW)

The first enforcement milestone has already passed. Any American company continuing prohibited AI practices affecting EU residents is already in violation and accumulating potential penalties.

Immediate action required: Audit all AI systems for prohibited practices. If found, discontinue immediately and document remediation.

August 2, 2025: GPAI Governance Obligations

General-Purpose AI models (like GPT-4, Gemini, Llama) face specific requirements:

- Technical documentation preparation

- EU copyright law compliance policies

- Detailed summaries of training data

- Cooperation with EU Commission

Models with “systemic risk” (trained with >10^25 FLOPs or designated by Commission) face additional obligations: adversarial testing, risk assessment, incident reporting, cybersecurity measures.
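To get a rough sense of where a model sits relative to the 10^25 FLOPs threshold, the sketch below uses a common rule of thumb of about 6 floating-point operations per parameter per training token. That approximation is an assumption borrowed from the scaling-law literature, not a method prescribed by the Act, and the function names are hypothetical.

```python
SYSTEMIC_RISK_THRESHOLD_FLOPS = 10**25  # threshold cited in the text above


def estimate_training_flops(parameters: int, training_tokens: int) -> int:
    """Rough estimate: about 6 FLOPs per parameter per training token (assumption)."""
    return 6 * parameters * training_tokens


def presumed_systemic_risk(training_flops: int) -> bool:
    """True when cumulative training compute exceeds the threshold."""
    return training_flops > SYSTEMIC_RISK_THRESHOLD_FLOPS


if __name__ == "__main__":
    # Hypothetical model sizes, each trained on 15 trillion tokens.
    for params in (70 * 10**9, 405 * 10**9):
        flops = estimate_training_flops(params, 15 * 10**12)
        print(f"{params:.2e} params -> {flops:.2e} FLOPs, over threshold: "
              f"{presumed_systemic_risk(flops)}")
```

An estimate like this is only a screening step. The Commission can also designate a model as posing systemic risk regardless of its compute, so a result under the threshold does not settle the question.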

February 2, 2026: High-Risk Guidelines

The European Commission must publish guidelines specifying how to comply with high-risk AI requirements, including practical examples of high-risk vs. non-high-risk systems. Note: As of February 2026, the Commission has reportedly missed this deadline, creating compliance uncertainty.

August 2, 2026: High-Risk Compliance Deadline (THE BIG ONE)

All high-risk AI systems must be fully compliant. This includes:

- HR/recruiting AI

- Credit scoring and financial AI

- Medical device AI

- Educational AI

- Critical infrastructure AI

For U.S. companies: If your AI system affects EU residents and falls into high-risk categories, you must have completed conformity assessments, technical documentation, human oversight protocols, and EU representative appointment by this date.

Penalty for non-compliance: Up to €15 million or 3% of global turnover

August 2, 2027: Full Implementation

Remaining high-risk AI systems (those embedded in regulated products like toys, medical devices, vehicles) must comply. All GPAI models placed on market before August 2025 must meet requirements.
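The dates above lend themselves to a simple lookup that a compliance dashboard could run each day. The sketch below is illustrative: the `MILESTONES` list and function names are hypothetical, and the February 2, 2026 guidelines date is left out because it is a deadline for the Commission rather than for companies.

```python
from datetime import date

# Company-facing milestones from the timeline above.
MILESTONES = [
    (date(2025, 2, 2), "Prohibited practices ban"),
    (date(2025, 8, 2), "GPAI governance obligations"),
    (date(2026, 8, 2), "High-risk compliance deadline"),
    (date(2027, 8, 2), "Full implementation"),
]


def in_force(today: date) -> list[str]:
    """Milestones whose date has already arrived."""
    return [label for when, label in MILESTONES if when <= today]


def next_milestone(today: date):
    """Return (label, days remaining) for the next milestone, or None if all have passed."""
    upcoming = [(when, label) for when, label in MILESTONES if when > today]
    if not upcoming:
        return None
    when, label = min(upcoming)
    return label, (when - today).days


if __name__ == "__main__":
    today = date.today()
    print(in_force(today))
    print(next_milestone(today))
```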

Industry-Specific Compliance Requirements

Human Resources and Recruiting

AI used for recruiting, screening, performance evaluation, promotion, or termination is classified as high-risk. American companies using AI for any HR function affecting EU candidates or employees must comply by August 2026.

Specific obligations:

- Worker notification before implementation

- Informing workers’ representatives where applicable

- Human oversight by individuals with appropriate competence and authority

- Monitoring for discrimination and adverse impacts

- Automatic logging with 6-month minimum retention

- Fundamental Rights Impact Assessment for certain deployers

According to Ogletree Deakins’ analysis, “A U.S. company using an AI-powered résumé screener for a global applicant pool could be covered if that system ranks or filters EU-based candidates.”

Financial Services

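Credit scoring and creditworthiness assessment systems are high-risk, as is AI used for risk assessment and pricing in life and health insurance. AI used solely to detect financial fraud is expressly carved out of the credit-scoring category. U.S. financial institutions must ensure: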

- Models are explainable and transparent

- Bias testing and monitoring protocols

- Human oversight of credit decisions

- Algorithmic impact assessments

- Alignment with both EU AI Act and U.S. fair lending laws

Healthcare

AI diagnostic tools, clinical decision support systems, and software embedded in medical devices face high-risk classification. Compliance requires:

- Conformity assessment (likely third-party for medical devices)

- CE marking

- Clinical evaluation and validation

- Post-market surveillance

- Integration with existing medical device regulations

Technology and SaaS

General-purpose AI models and platforms face GPAI obligations. Systemic risk models (high compute, high impact) face enhanced requirements. All tech companies must evaluate:

- Does our AI model qualify as GPAI?

- Does it pose systemic risk (>10^25 FLOPs)?

- Are we providing or deploying high-risk AI?

- Do we need an EU authorized representative?

The Compliance Action Plan: What U.S. Companies Must Do Now

Phase 1: Immediate (Q1 2025)

1. AI System Inventory

Catalog every AI system in your organization. Document:

- Purpose and functionality

- Data inputs and outputs

- Whether outputs affect EU residents

- Risk classification under EU AI Act

- Current compliance gaps
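The inventory fields listed above map naturally onto a small record type. The following is a minimal sketch, assuming a flat in-memory list is enough for a first pass; the class name, field names, and the `needs_attention` filter are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class AISystemRecord:
    name: str
    purpose: str
    data_inputs: list[str]
    data_outputs: list[str]
    affects_eu_residents: bool
    risk_tier: str  # "prohibited", "high", "limited", or "minimal"
    compliance_gaps: list[str] = field(default_factory=list)


def needs_attention(inventory: list[AISystemRecord]) -> list[AISystemRecord]:
    """EU-facing systems that are prohibited, high-risk, or have open gaps."""
    return [
        record
        for record in inventory
        if record.affects_eu_residents
        and (record.risk_tier in {"prohibited", "high"} or record.compliance_gaps)
    ]


if __name__ == "__main__":
    inventory = [
        AISystemRecord(
            name="resume-screener",
            purpose="Rank job applicants",
            data_inputs=["resumes"],
            data_outputs=["candidate ranking"],
            affects_eu_residents=True,
            risk_tier="high",
            compliance_gaps=["no human oversight protocol"],
        ),
        AISystemRecord(
            name="spam-filter",
            purpose="Filter inbound email",
            data_inputs=["email"],
            data_outputs=["spam flag"],
            affects_eu_residents=True,
            risk_tier="minimal",
        ),
    ]
    for record in needs_attention(inventory):
        print(record.name, record.compliance_gaps)
```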

2. Prohibited Practices Audit

Immediately cease any prohibited AI practices affecting EU residents. Document remediation efforts.

3. Legal Assessment

Determine if your company qualifies as provider, deployer, or both. Assess extraterritorial exposure.

Phase 2: Short-Term (Q2-Q3 2025)

4. Appoint EU Authorized Representative

Non-EU providers must appoint an authorized representative established in the EU. This representative must have access to compliance documentation and cooperate with authorities.

5. Establish AI Governance Framework

Create internal policies for:

- Risk management and assessment

- Data governance and quality

- Human oversight protocols

- Incident reporting procedures

- Record-keeping and documentation

6. Vendor Diligence

Review all AI vendor contracts. Ensure providers offer:

- Technical documentation

- Instructions for use

- Compliance warranties

- Audit cooperation commitments

- Incident notification procedures

Phase 3: Medium-Term (Q4 2025-Q2 2026)

7. Technical Documentation

For high-risk AI, prepare comprehensive technical documentation including:

- System architecture and design

- Training methodologies and data

- Validation and testing results

- Risk assessment and mitigation measures

- Performance metrics and limitations

8. Human Oversight Implementation

Design and implement meaningful human oversight:

- Assign qualified oversight personnel

- Create intervention protocols

- Establish override authority

- Document oversight activities
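One way to make these four steps concrete is to route every AI recommendation through a named reviewer who can confirm or override it, and to record each review. The sketch below is illustrative only: `Decision`, `OversightLog`, and their fields are hypothetical, and a production system would persist the log rather than keep it in memory.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Decision:
    subject_id: str
    ai_recommendation: str
    final_outcome: Optional[str] = None
    reviewer: Optional[str] = None
    overridden: bool = False


class OversightLog:
    """Records every human review so oversight activity can be documented."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def review(self, decision: Decision, reviewer: str, outcome: str, reason: str) -> Decision:
        """Apply the reviewer's outcome, flag overrides, and log the review."""
        decision.reviewer = reviewer
        decision.final_outcome = outcome
        decision.overridden = outcome != decision.ai_recommendation
        self.entries.append(
            {
                "timestamp": datetime.now(timezone.utc).isoformat(),
                "subject_id": decision.subject_id,
                "ai_recommendation": decision.ai_recommendation,
                "final_outcome": outcome,
                "reviewer": reviewer,
                "overridden": decision.overridden,
                "reason": reason,
            }
        )
        return decision


if __name__ == "__main__":
    log = OversightLog()
    decision = Decision(subject_id="candidate-001", ai_recommendation="reject")
    log.review(decision, reviewer="hr-lead", outcome="advance", reason="Relevant experience missed")
    print(decision.overridden, len(log.entries))
```

Keeping the AI recommendation and the final outcome as separate fields means the override rate can be measured later, which is one simple signal of whether the oversight is meaningful or a rubber stamp.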

9. Conformity Assessment

Conduct internal assessment or engage notified body for third-party assessment. Obtain CE marking for high-risk systems.

10. Registration

Register high-risk AI systems in EU database before first use.

Phase 4: Ongoing (August 2026 and Beyond)

11. Post-Market Monitoring

Establish continuous monitoring for:

- Performance degradation

- Discriminatory outcomes

- Adverse incidents

- Emerging risks

12. Incident Reporting

Report serious incidents to national competent authorities within specified timeframes.

13. Continuous Compliance

Regular audits, documentation updates, and adaptation to regulatory guidance.

The Strategic Implications: Beyond Compliance

The EU AI Act is not merely a regulatory hurdle. It is a strategic inflection point that will reshape competitive dynamics in AI.

The Compliance Moat

Companies that achieve early compliance will gain competitive advantage. The cost and complexity of EU AI Act compliance creates barriers to entry that favor established players with resources to invest in governance, documentation, and legal infrastructure. Startups may find European expansion prohibitively expensive—a dynamic that could entrench incumbents.

The Global Standard

As with GDPR, the EU AI Act is becoming the global template. Brazil, Canada, Japan, and others are developing similar frameworks. U.S. companies building EU AI Act compliance will be well-positioned for emerging regulations worldwide. Those who ignore it will face repeated compliance investments as jurisdictions pile on.

The Innovation Paradox

Strict regulation could theoretically stifle innovation, but the Act also builds in countermeasures. Its regulatory sandboxes and support for SMEs may actually accelerate responsible AI development. Companies that solve for compliance may discover competitive advantages in trust, transparency, and reduced regulatory risk.

The U.S. Response

The EU AI Act is forcing American regulatory action. The patchwork of state AI laws (California, Colorado, New York) is creating compliance complexity that may drive federal preemption. Companies should monitor both EU and U.S. developments, designing controls that satisfy both to avoid fragmented compliance.

The Bottom Line: Act Now or Pay Later

The EU AI Act represents the most significant expansion of global technology regulation since GDPR. Its extraterritorial reach, severe penalties, and complex compliance requirements create existential risk for unprepared American companies.

The February 2025 prohibition deadline has already passed. The August 2026 high-risk compliance deadline is approaching. Companies that delay action risk:

- Penalties of up to 7% of global revenue

- Market exclusion from the EU

- Reputational damage from enforcement actions

- Cascading liability under other regulations

- Competitive disadvantage against compliant rivals

The choice is clear: invest in compliance now, or face billion-dollar consequences later. The EU AI Act is not a distant threat. It is active law with active enforcement. American companies must treat it with the same urgency as any other existential business risk.

The countdown to August 2026 is ticking. The time for action is now.

References and Legal Sources

- European Union (2024). Regulation (EU) 2024/1689 of the European Parliament and of the Council of 13 June 2024 laying down harmonised rules on artificial intelligence. Official Journal of the European Union. https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=CELEX:32024R1689

- European Commission (2025). AI Act Service Desk – Article 99: Penalties. https://ai-act-service-desk.ec.europa.eu/en/ai-act/article-99

- White & Case LLP (2024). Long awaited EU AI Act becomes law after publication in the EU’s Official Journal. https://www.whitecase.com/insight-alert/long-awaited-eu-ai-act-becomes-law-after-publication-eus-official-journal

- Ogletree Deakins (2025). The EU AI Act Is Here—What It Means for U.S. Employers. https://ogletree.com/insights-resources/blog-posts/cybersecurity-awareness-month-in-focus-part-iii-the-eu-ai-act-is-here-what-it-means-for-u-s-employers/

- Bond Schoeneck & King PLLC (2025). The EU AI Act: What U.S. Companies Need to Know. https://www.bsk.com/news-events-videos/the-eu-ai-act-what-u-s-companies-need-to-know

- Quinn Emanuel Urquhart & Sullivan (2025). Initial Prohibitions Under EU AI Act Take Effect. https://www.quinnemanuel.com/the-firm/publications/initial-prohibitions-under-eu-ai-act-take-effect/

- IBM (2024). What is the EU AI Act? https://www.ibm.com/think/topics/eu-ai-act

- eyreACT (2025). GDPR vs AI Act: The Missing Compliance Layer. https://www.eyreact.com/gdpr-vs-ai-act-compliance-layer/

Legal Disclaimer: This article is for informational purposes only and does not constitute legal advice. The EU AI Act is complex and evolving. Companies should consult qualified legal counsel for specific compliance guidance. Regulatory deadlines and requirements are subject to change.


About the Author

InsightPulseHub Editorial Team creates research-driven content across finance, technology, digital policy, and emerging trends. Our articles focus on practical insights and simplified explanations to help readers make informed decisions.